You interact with large language models (LLMs) every day, whether you’re asking ChatGPT for advice, searching through Perplexity, or using voice prompts in Gemini. But have you ever wondered if you can influence what these AI systems say about your brand, products, or industry? That question lies at the core of LLM optimization.

LLM optimization (LLMO) is the practice of structuring your digital presence so that generative AI models can easily retrieve, trust, and reuse your content in their responses. You’re no longer optimizing only for keyword rankings on a search results page; you’re optimizing for visibility inside the retrieval and generation pipeline behind the model’s answers. It may sound futuristic, but it’s already happening.

When executed well, LLMO doesn’t just make you discoverable. It makes you recommendable, something a generative AI SEO agency often helps brands achieve.

How Digital Content Strategies Influence LLM Outputs

When your content is indexed, well structured, and contextually strong, it can shape how AI systems interpret and surface information. The real challenge is learning how to influence those outputs consistently and ethically.

AI assistants like ChatGPT, Bing Chat (now Microsoft Copilot), and Google Gemini draw on a blend of sources. Some use live web data. Others rely on curated retrieval indexes or prioritize citations from high-trust domains. All of them, however, are engineered to deliver fast, reliable answers. As a result, they tend to favor content that demonstrates clear intent, strong structure, and high contextual relevance.

In practice, that means your content strategy can increase your chances of being retrieved, cited, or paraphrased by AI systems. It does so not through manipulation, but through alignment with what users actually ask and what these systems are built to surface.

How LLMs Retrieve Information

To optimize effectively, you first need to understand how large language models interact with your content. They don’t “read” pages the way humans do. They rely on:

- Retrieval-Augmented Generation (RAG) systems that pull snippets from indexed sources.

- Semantic embeddings that represent queries and passages as vectors, so systems can match user intent with relevant content by meaning rather than by exact keywords.

- Entity recognition to identify specific people, brands, and products and connect them to what the system already knows about them.

- Structured signals, such as schema markup and clean site architecture.
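To make the retrieval step above more concrete, here is a minimal sketch of how a retriever might rank passages against a prompt. It is a simplification under stated assumptions: production RAG systems use learned neural embeddings and vector indexes, while this toy version substitutes word-count vectors and cosine similarity so it runs with the standard library alone. The function names and sample passages are hypothetical.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Turn text into a bag-of-words count vector (a crude stand-in for a learned embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, passages: list[str], k: int = 2) -> list[tuple[float, str]]:
    """Score every passage against the query and return the top-k (score, passage) pairs."""
    q = vectorize(query)
    scored = [(cosine(q, vectorize(p)), p) for p in passages]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Hypothetical passages taken from three different pages.
passages = [
    "Our running shoes are lightweight and built for marathon training.",
    "Company history: founded in 1998 in a small garage.",
    "Which running shoes are best for marathon training? Lightweight models with cushioning.",
]

for score, passage in retrieve("best running shoes for marathon training", passages):
    print(f"{score:.2f}  {passage}")
```

The passage phrased like the user’s question scores highest, while the off-topic history passage scores zero. Real embeddings also capture synonyms and paraphrases, but the ranking principle is similar.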

When you publish a new page, it enters a landscape of AI retrievers and ranking signals. If your content is slow to load, vague, or poorly structured, it can fall off the radar. But if you deliver crisp answers aligned with real-world prompts, your chances of being surfaced go up.

What Makes Content “AI-Ready”?

You need more than traditional SEO to thrive in AI search. To become “AI-ready,” your content must check four essential boxes:

- Machine-Readable: Use structured data (like FAQ schema or product markup) that helps AI systems parse and prioritize your content.

- Prompt-Aligned: Write content that answers common user queries exactly as they’re phrased.

- Entity-Rich: Establish clear associations with brands, people, and products that LLMs recognize.

- Intent-Accurate: Deliver immediate value based on the searcher’s goal, not just the keyword used.
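As an illustration of the machine-readable and prompt-aligned points above, the sketch below generates FAQ structured data in JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. The helper name `faq_jsonld` and the sample question are hypothetical; the point is simply that each question is written the way a user might phrase a prompt, and the answer sits right next to it in a format machines can parse.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical Q&A pair, phrased the way a user might prompt an assistant.
markup = faq_jsonld([
    (
        "What is LLMO?",
        "LLM optimization (LLMO) is the practice of structuring content so "
        "generative AI models can retrieve, trust, and reuse it.",
    ),
])

# The output is meant to be embedded in the page inside a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from the same source as the visible FAQ text helps keep the two in sync, so the structured data never claims something the page does not say.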

These principles don’t just help with ChatGPT or Gemini. They apply equally to other AI systems, from Claude to Copilot, that rely on a blend of retrieval logic and natural language understanding.

The Role of Trust, Relevance, and Schema

You may assume that if your site is indexed by Google or Bing, you’re automatically eligible for inclusion in AI-generated responses. But indexing alone isn’t enough: generative AI systems filter heavily for trust, clarity, and domain relevance.

Trust is inferred from signals such as backlink profiles, brand mentions, and user engagement. Relevance depends on how well your content aligns with user prompts. And schema markup helps AI systems parse your content’s structure quickly.

For example, a product page without clear schema markup may be skipped in favor of a competitor’s structured page, which is easier to find and interpret. An FAQ page without context may be ignored in favor of a page that mirrors real prompt phrasing. Structured content sends a message: “This page solves problems. Fast.”
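To see the product-page contrast from a machine’s point of view, here is a minimal sketch that checks which schema.org types a page declares in JSON-LD, using only Python’s standard `html.parser` and `json` modules. This is an assumption-laden illustration, not how any particular AI crawler works: it only looks at `<script type="application/ld+json">` blocks and ignores microdata and RDFa. The class and function names are hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw contents of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        # Script contents may arrive in several chunks, so append rather than assign.
        if self._in_jsonld:
            self.blocks[-1] += data


def schema_types(html: str) -> set[str]:
    """Return the set of top-level @type values declared in a page's JSON-LD."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types: set[str] = set()
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # malformed markup is skipped in this sketch
        items = data if isinstance(data, list) else [data]
        for item in items:
            declared = item.get("@type") if isinstance(item, dict) else None
            if isinstance(declared, str):
                types.add(declared)
            elif isinstance(declared, list):
                types.update(t for t in declared if isinstance(t, str))
    return types


# Hypothetical pages: one with Product markup, one with only visible text.
structured = (
    '<html><head><script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "Product", "name": "Trail Shoe"}'
    "</script></head><body><h1>Trail Shoe</h1></body></html>"
)
unstructured = "<html><body><h1>Trail Shoe</h1><p>Great shoe.</p></body></html>"

print(schema_types(structured))    # {'Product'}
print(schema_types(unstructured))  # set()
```

Both pages describe the same product to a human reader, but only the first one states explicitly, in machine-readable form, that it is about a `Product`.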

Ethical Considerations in Influencing AI

There’s a fine line between optimization and manipulation. Ethical LLMO means:

- Being transparent with users about your intent and expertise.

- Avoiding misinformation, fake reviews, or keyword stuffing.

- Prioritizing clarity, context, and usefulness over gaming the system.

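Generative AI systems have a long memory, both literal (content that ends up in indexes and training data) and reputational (trust signals that accumulate over time). If your content misleads or erodes user trust, it may be suppressed or deprioritized over time.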

The best influence comes from earning your place, not tricking your way in.

Shaping the Future of Search

You can’t control what every AI system says. But you can influence which sources they trust, retrieve, and represent. LLM optimization puts you in the driver’s seat: you don’t just rank, you become part of the answer. You don’t just advertise. You advise.

If you want to start showing up in AI answers across ChatGPT, Gemini, and Perplexity, this is your moment. Align your strategy now, and you won’t just follow the future of search; you’ll shape it.

FAQs

What is LLMO?

How do LLMs retrieve and process content?

How does trust impact my AI visibility?

Why is structured data important for AI optimization?

Can I manipulate AI systems to get my content referenced?

How does LLMO impact my SEO strategy?

Can I influence how AI systems view my brand or product?

How will LLMO change SEO in the future?

How can I start optimizing my content for AI search today?

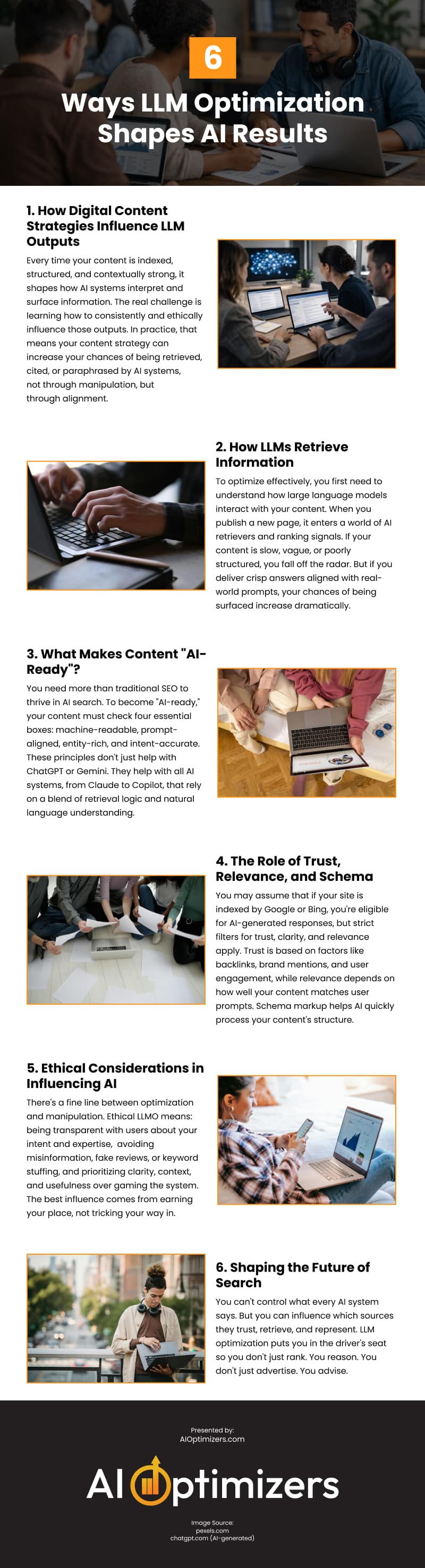

Infographic

Large language models greatly impact how people discover and evaluate brands through tools like ChatGPT, Perplexity, and Gemini. Therefore, LLM optimization aims not only to improve brand visibility but also to enhance how “recommendable” a brand is in responses. Read on to discover how LLM optimization influences AI results.